If your organization is deploying AI agents, fine-tuning open-source models, or piping third-party data into production systems, the security ground beneath you has shifted. Traditional perimeter defenses weren’t built for a world where autonomous agents make decisions at machine speed and a single poisoned dataset can quietly bias outputs for months. That’s why we’ve published our latest in-depth guide, and we’re excited to share it with you today.

What’s Inside

Zero-Trust Architecture for AI Supply Chains: The 2026 Governance Playbook walks through exactly how to apply “never trust, always verify” principles across the entire AI lifecycle — from the moment data is ingested to the moment a model returns a response in production.

Inside, you’ll find:

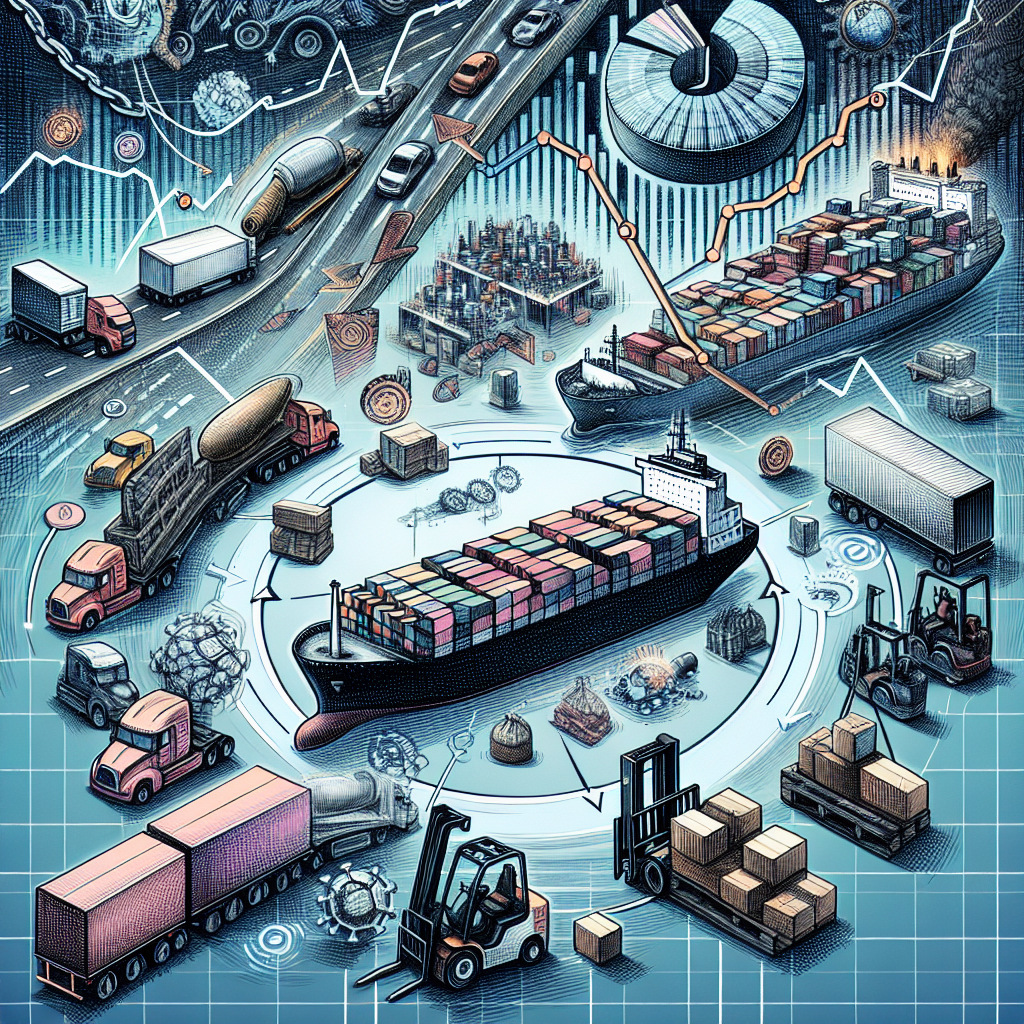

- The five pillars of a Zero-Trust AI supply chain, and what each one looks like in practice

- A phased implementation roadmap your team can start on this quarter

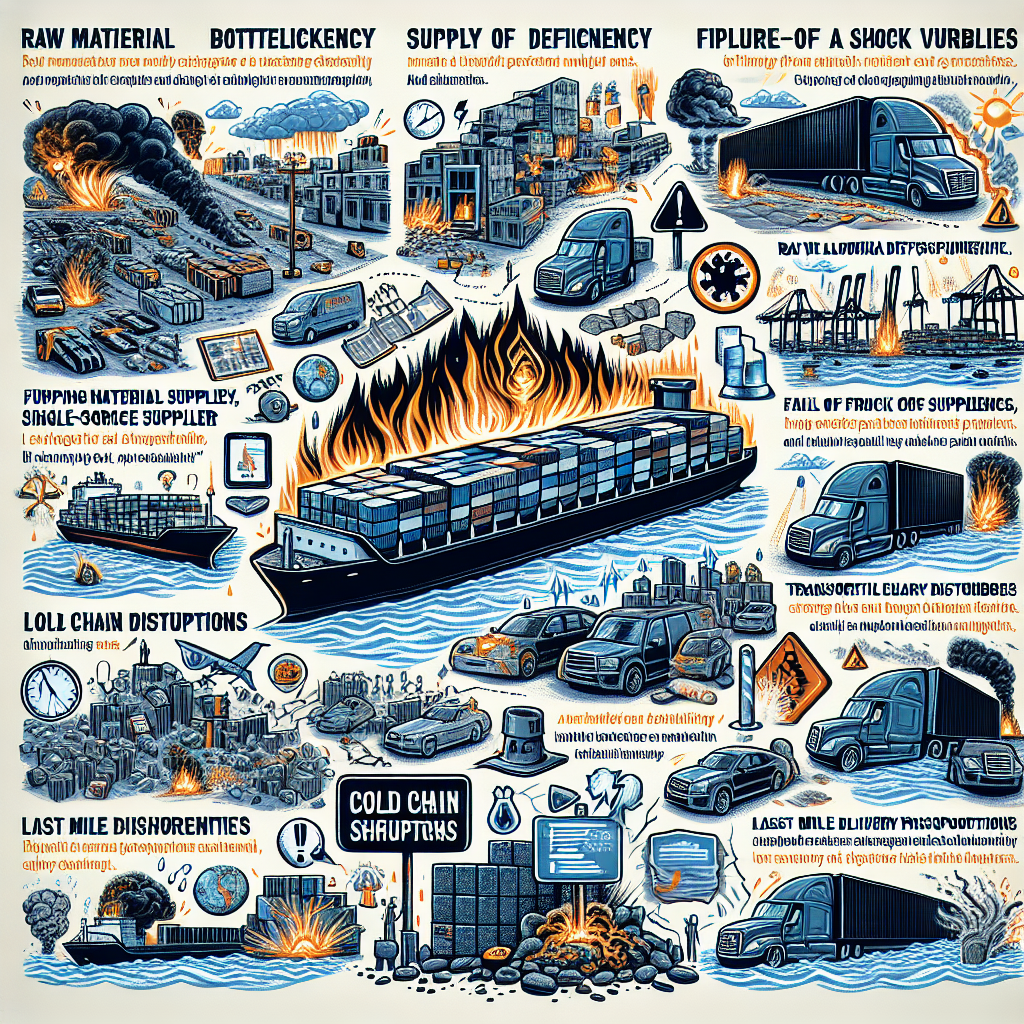

- The specific threats you’re defending against — malicious model infiltration, dataset poisoning, and API vulnerabilities

- A breakdown of the four frameworks shaping the regulatory landscape: ISO/IEC 42001, the NIST AI Risk Management Framework, the Agentic Trust Framework, and the EU AI Act

- Three high-leverage actions you can take Monday morning

Why Now

The EU AI Act’s high-risk obligations are currently scheduled to take effect on August 2, 2026. Independent estimates put initial compliance investments for large enterprises at $8–15 million — and that’s assuming you start now. A proposed deferral to December 2027 is being negotiated in Brussels, but until it’s formally adopted, August 2026 remains the operative deadline. Organizations gambling on an extension are taking on real regulatory risk.

Meanwhile, ISO/IEC 42001, the world’s first AI management system standard, is rapidly becoming the credential customers, partners, and auditors expect to see. The companies that treat AI governance as a track running parallel to innovation, rather than as a brake on it, are the ones reaching production faster and building the kind of trust that compounds.

Who Should Read It

This guide is written for security leaders, compliance officers, ML platform engineers, and the executives who sign off on AI strategy. If you’re responsible for any of the following, it’s worth your time:

- Approving the use of pre-trained models from public repositories

- Standing up agentic AI systems that act on behalf of users

- Mapping your AI footprint against ISO/IEC 42001 or the EU AI Act

- Building the case for AI governance investment to your leadership team

Even if you’ve already started the work, the framework comparisons and the phased rollout plan should sharpen your priorities.

Read the Full Guide

Head over to Zero-Trust Architecture for AI Supply Chains: The 2026 Governance Playbook to dig in. Bookmark it, share it with your security and compliance teams, and let us know what we missed. We update our guides as the landscape evolves, and the regulations covered in this one are changing fast.

The supply chain feeding your AI is now part of your attack surface. Time to govern it accordingly.